Large Language Model (LLM)

Direct access to a variety of large language models and many supporting features

We believe that large language models (LLMs) like GPT will change how software is used and the way we work. With Relevance, using LLMs is extremely easy, since all the requirements (e.g. access, settings, output handling) are taken care of.

You communicate with LLMs in written natural language. The text that provides information and instructions to an LLM is called a “prompt”. Each time you use an LLM, you will need to:

- write up a good prompt

- choose a model

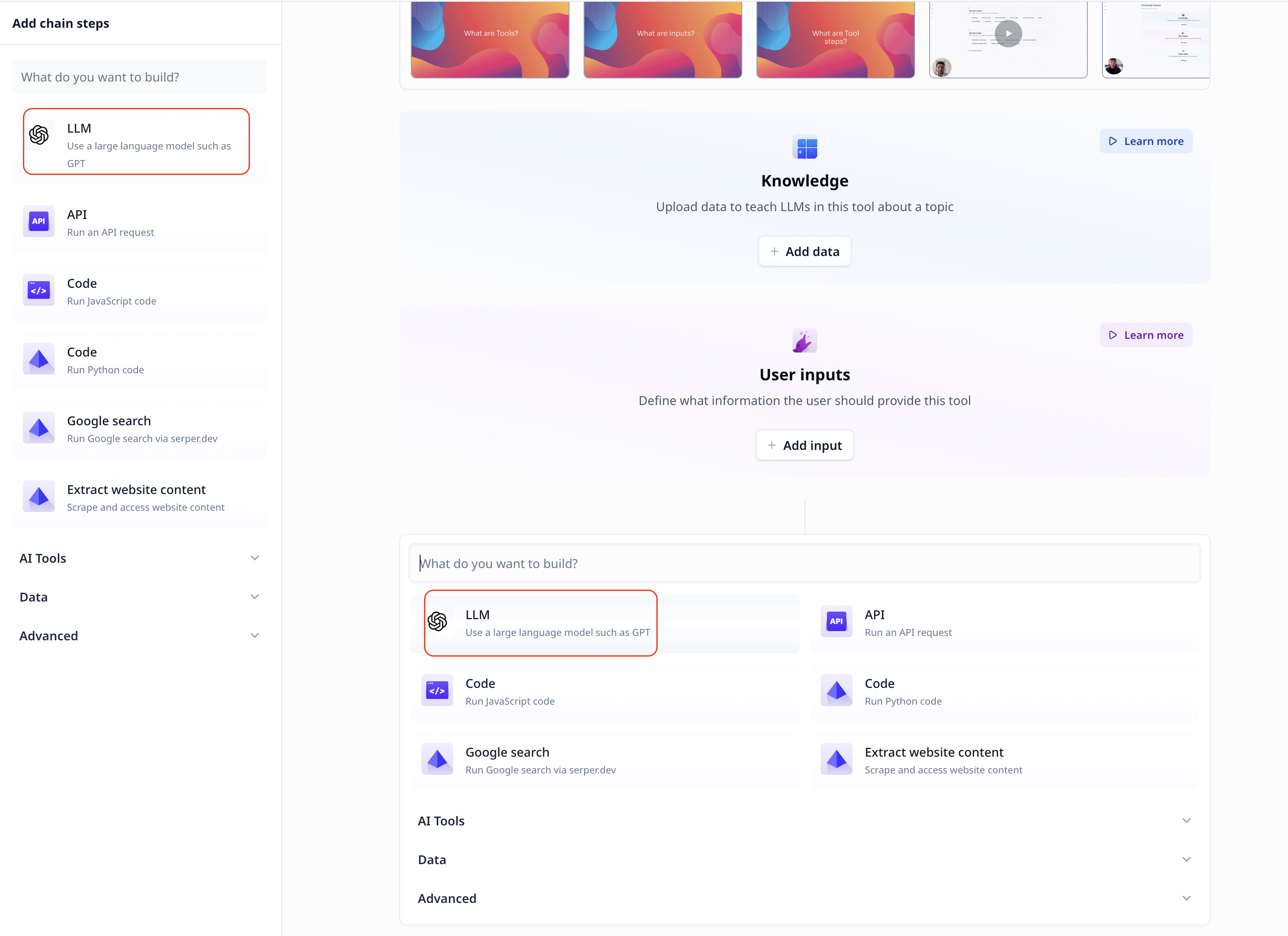

How to use an LLM step

To use an LLM, add an “LLMs” step to your Tool (see how to get started with creating a tool).

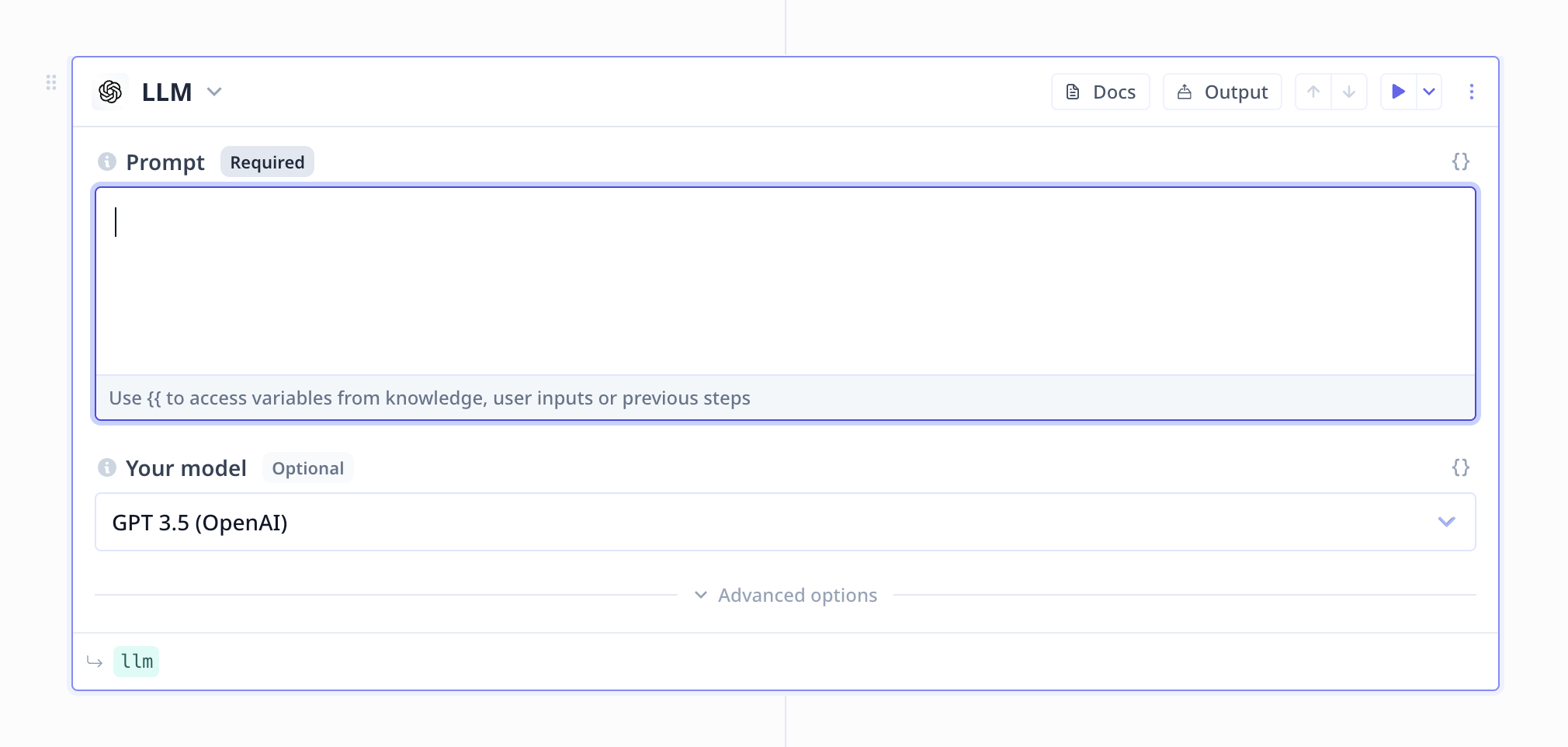

You can then choose the model you want to use, and write up your prompt in the base window.

Prompt

A prompt is written text that contains the information you want to give a language model, along with your instructions and expectations. It is important to be clear and explicit. Notes on prompt engineering, with real samples, are provided at How to write a good prompt.

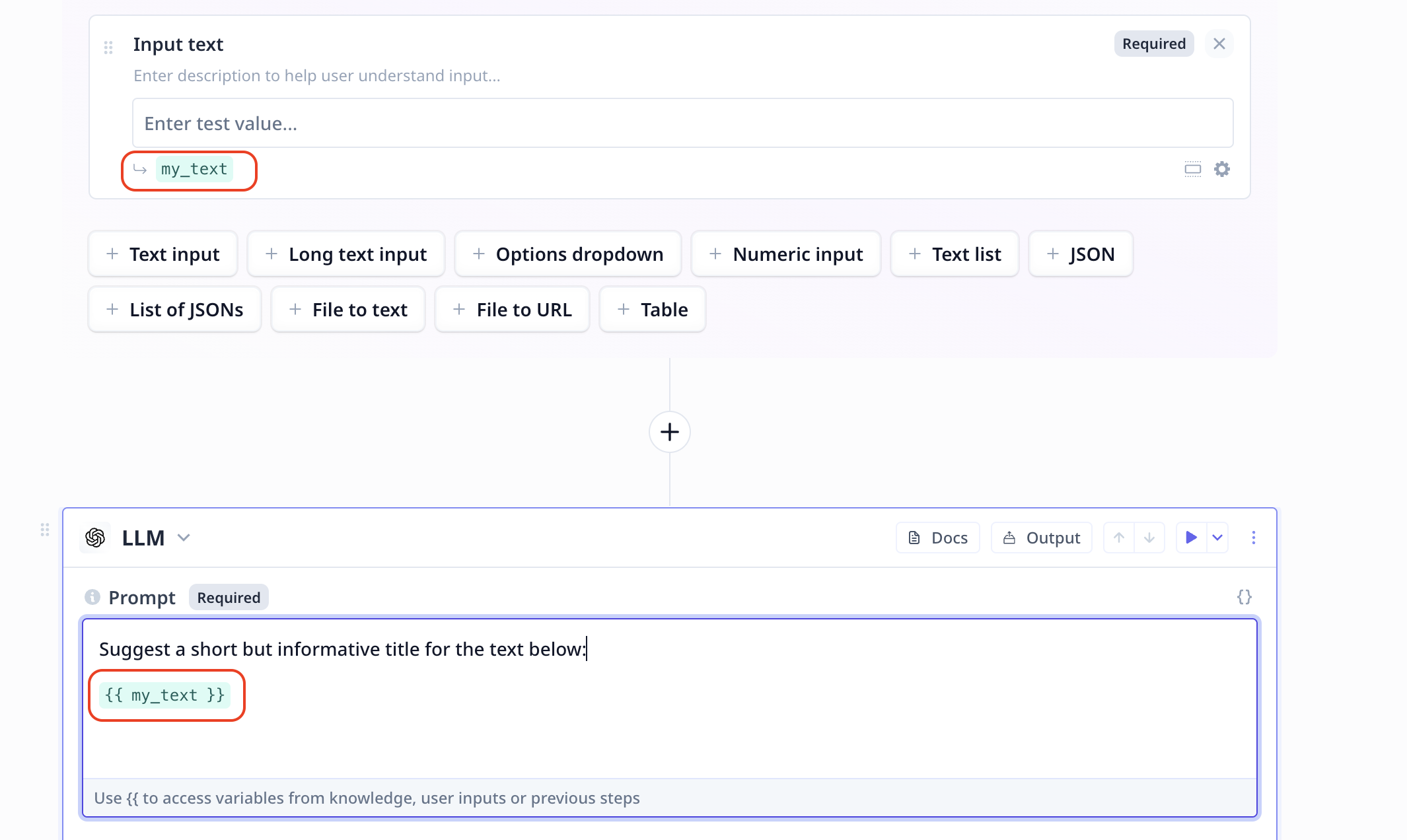

Access to input variables and other step outputs

The prompt input accepts both regular text and variable templating using the {{}} syntax.

For instance, if there is an input variable called “my_text”, you can include it in the prompt using {{my_text}}.

Start typing a variable name and you will see a list of available variables to choose from.
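To make the {{}} templating concrete, here is a minimal Python sketch of how a placeholder such as {{my_text}} could be replaced by a variable's value. It only illustrates the idea and is not Relevance's actual templating engine; the function name `render_prompt` and the sample text are assumptions made for this example.

```python
import re

# Matches {{ name }}, allowing optional whitespace inside the braces.
_PLACEHOLDER = re.compile(r"\{\{\s*([A-Za-z_][A-Za-z0-9_.]*)\s*\}\}")


def render_prompt(template: str, variables: dict) -> str:
    """Replace each {{name}} placeholder with its value from `variables`.

    Hypothetical helper for illustration only.
    """
    def substitute(match):
        name = match.group(1)
        if name not in variables:
            # Fail loudly rather than sending a half-filled prompt.
            raise KeyError(f"No value provided for variable '{name}'")
        return str(variables[name])

    return _PLACEHOLDER.sub(substitute, template)


prompt = render_prompt(
    "Summarize the following text in one sentence:\n{{my_text}}",
    {"my_text": "Large language models generate text from prompts."},
)
print(prompt)
```

Raising an error for an unknown variable is a design choice in this sketch: a prompt with an unfilled placeholder usually produces confusing model output, so it is safer to stop early.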

Model

To use a model through your own subscription, make sure to add your API key from the provider.

Otherwise, Relevance's keys are used and the usage is counted toward your credit costs.

We support not just GPT but also models from other vendors such as Cohere and Anthropic, and we are always adding to this list. Implement once, knowing that your product can take advantage of new models as they come out.

| Model name | Model ID | Provider | Model specifics |
|---|---|---|---|
| GPT 4 | openai-gpt4 | OpenAI | English data, larger context (compared to GPT 3.5), Strong reasoning, Coding, Layout, 200+ output languages |
| GPT 4 NEW | openai-gpt4-0613 | OpenAI | GPT 4 new version (improved accuracy) |
| GPT 3.5 | openai-gpt35 | OpenAI | English data, Medium context, Simple reasoning, Coding, Layout |
| GPT 3.5 NEW | openai-gpt35-0613 | OpenAI | GPT 3.5 new version (improved accuracy) |
| GPT 3.5 16k | openai-gpt35-16k | OpenAI | GPT 3.5 with increased context window |
| Claude | anthropic-claude-v1 | Anthropic | Large context, Strong in parsing large texts and documents |
| Claude (100k) | anthropic-claude-v1-100k | Anthropic | Claude with increased context window |
| Claude Instant | anthropic-claude-instant-v1 | Anthropic | Anthropic’s fastest model |
| Claude Instant (100k) | anthropic-claude-instant-v1-100k | Anthropic | Anthropic’s fastest model with increased context window |
| Text Bison | palm-text-bison | Palm | PaLM 2 model, Fast, Strong in tasks such as sentiment analysis, entity extraction, and content creation |
| Chat Bison | palm-chat-bison | Palm | PaLM 2 model, Fast, Strong in tasks such as language understanding, language generation, and conversations |
| Command | cohere-command | Cohere | Cohere model, supports over 100 languages |
| Command Light | cohere-command-light | Cohere | Cohere model, easy to retrain |

The following pages explain more advanced settings for your LLM component:

- Conversation history

- System prompt

- Temperature

- Validators

- How to handle large amounts of text/context

Common errors

Prompt is too long. Please reduce prompt in length.

The error message below indicates that the provided prompt contains more tokens than the chosen model allows. To resolve the issue, you can use a model that supports a higher number of tokens. For large text inputs, Relevance provides techniques to automatically keep the tokens within the accepted range; more information is available at How to handle too much text.

400: {"message":"aviary.backend.llm.error_handling.PromptTooLongError: Input too long. Received 5002 tokens, but the maximum input length is 4090 tokens.","internal_message":"aviary.backend.server.openai_compat.openai_exception.OpenAIHTTPException","code":400,"type":"PromptTooLongError","param":{}}

Token limit for each model
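If you handle this error in your own code, the token counts can be read from the message to see how much the prompt needs to shrink. The sketch below is a hedged illustration that assumes the error arrives as text in the same "status: JSON" shape as the sample above (shortened here by omitting `internal_message`); the function name `parse_prompt_too_long` is hypothetical, and the message format may differ between models and providers.

```python
import json
import re


def parse_prompt_too_long(error_text: str):
    """Return (received_tokens, max_tokens), or None for any other error.

    Hypothetical helper; assumes the "400: {json}" shape shown in the sample.
    """
    # Drop the leading status prefix ("400: ") and keep the JSON payload.
    _, _, payload = error_text.partition(": ")
    try:
        body = json.loads(payload)
    except json.JSONDecodeError:
        return None
    if not isinstance(body, dict) or body.get("type") != "PromptTooLongError":
        return None
    match = re.search(
        r"Received (\d+) tokens, but the maximum input length is (\d+) tokens",
        body.get("message", ""),
    )
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


error = (
    '400: {"message":"aviary.backend.llm.error_handling.PromptTooLongError: '
    'Input too long. Received 5002 tokens, but the maximum input length is '
    '4090 tokens.","code":400,"type":"PromptTooLongError","param":{}}'
)

received, maximum = parse_prompt_too_long(error)
print(f"Prompt is {received - maximum} tokens over the limit")  # 912 tokens over
```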

Validation

When output validations are set for an LLM, Relevance automatically checks the output to confirm its validity. If the output does not match the required setup, the error below is raised. The best solution is to improve your prompt with more explanation or examples.

Prompt completion did not pass validation

Too large data

When you use Relevance to handle large inputs by selecting the most relevant entries and the input data is too large, you need to upload it as a dataset and use it as knowledge in your Tool. The maximum size for non-knowledge data is 131,072 tokens (~90kb).

Data is too large for the 'Most relevant data'. Consider adding the data to knowledge.

Rate limit

This error happens when the API key in use has a different rate limit from the one Relevance uses by default. Retrying with pauses of varying length between attempts usually resolves this issue.

429: {"message":"Rate limit reached for default-gpt-4 in organization org-... on tokens per min. Limit: 40000 / min. Please try again in 1ms. Contact us through our help center at help.openai.com if you continue to have issues.","type":"tokens","param":null,"code":"rate_limit_exceeded"}
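One common way to retry with pauses is exponential backoff with jitter. The following Python sketch shows the pattern under stated assumptions: `RateLimitError` is a hypothetical exception standing in for a 429 response, and `run_step` is a placeholder for whatever call triggers your LLM step. Neither is part of a Relevance API.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical exception standing in for a 429 rate-limit response."""


def call_with_backoff(run_step, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call `run_step`, pausing longer after each rate-limit failure."""
    for attempt in range(max_attempts):
        try:
            return run_step()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # Out of attempts: surface the error to the caller.
            # Exponential backoff plus jitter: roughly 1s, 2s, 4s, ...
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)


# Usage: a stand-in step that is rate limited twice, then succeeds.
attempts = {"count": 0}


def flaky_step():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimitError("429: rate limit reached")
    return "ok"


# The no-op sleep keeps the demo instant; omit it to actually wait.
result = call_with_backoff(flaky_step, sleep=lambda seconds: None)
print(result, attempts["count"])  # ok 3
```

The random jitter spreads retries out so that several runs hitting the limit at the same moment do not all retry together.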

Negative credits

The following error indicates that the credits are below zero and you need to top up to be able to continue using the platform.

Organization Entitlement setting negative_credits : {"limit":0} does not allow this action.

LLM run rate

This error happens when the API key in use has a different run limit from the one Relevance uses by default. Retrying with a longer pause between runs usually resolves this issue.

The maximum number of LLM runs per minute for your OpenAI Plan has been reached. If you are using your own OpenAI Key, please either delete your key to use Relevances account, or upgrade your OpenAI Plan.

429: {"message":"You exceeded your current quota, please check your plan and billing details.","type":"insufficient_quota","param":null,"code":"insufficient_quota"}

Temperature

There is a temperature parameter under the LLM advanced settings. The error below occurs if the entered value is outside the accepted range of 0 to 1.

400: {"message":"-0.5 is less than the minimum of 0 - 'temperature'","type":"invalid_request_error","param":null,"code":null}

History

This error occurs if History is set to an empty array. Either enter values, or use the X button on the right side of each row to remove the empty rows.

Studio transformation prompt_completion input validation error: must be array {"type":"array"} /history

Plan limitations

The below error occurs when GPT-4 is used under a Relevance account with a plan that does not support the GPT-4 model.

Organization Entitlement setting premium_llm_generation : {"limit":false} does not allow this action.
